Abstract

Contemporary information warfare constitutes a fundamental and evolving aspect of political strategy, increasingly influenced by advancements in technology that enhance the capabilities for influence and disruption. The rapid development and deployment of sophisticated tools have notably increased the reach, speed, and complexity of operations aimed at shaping perceptions and affecting decision-making processes. These developments present significant challenges to established political systems and institutions, particularly those grounded in open and pluralistic governance. As highlighted by Hunter et al. (2024), the expanded scale and intricacy of these operations underscore the pressing need for robust analytical frameworks and defensive strategies to safeguard societal resilience and institutional stability. This paper analyzes the dynamic strategies employed by major global powers within the information environment, highlighting the distinct approaches of the United States, Russia, and China (Hunter et al., 2024). It further explores the integration of AI in hybrid warfare tactics, including automated disinformation generation, cognitive manipulation, and cyber-enabled infrastructure disruptions. Emphasizing NATO’s doctrinal framework, particularly the Allied Joint Publication AJP-3.10 (NATO, 2023), the study underscores the alliance’s role in fostering resilience, ethical AI governance, and coordinated defensive strategies (NATO Allied Command Transformation, 2025). A fictional case study illustrates these multifarious threats and responses, revealing the profound vulnerabilities and necessary countermeasures democracies must adopt to safeguard institutional integrity and public trust in the age of AI-driven information warfare.

Introduction

Information warfare (IW) has evolved into a critical element of states’ security strategies (Hunter et al., 2024). It is defined broadly as the deliberate use of information to confuse, mislead, and influence adversary choices. Contemporary IW integrates aspects of electronic warfare, cyber-warfare, and psychological warfare to achieve strategic objectives (Bryczek-Wróbel & Moszczyński, 2022). The information environment, which encompasses physical networks, communication systems, people, human networks, and devices and social media interfaces, has become increasingly central to achieving information superiority in modern conflicts (U.S. Department of the Army, 2018; Nye, 2019).

NATO’s Information Warfare strategy is principally articulated in its Allied Joint Publication AJP-3.10, “Allied Joint Doctrine for Information Operations.” According to this doctrine, information operations (Info Ops) involve coordinated and synchronized activities designed to create desired effects on the will, understanding, and capabilities of adversaries or other actors, supporting NATO’s overall mission objectives.

The doctrine emphasizes integrating Info Ops into all levels of military planning, highlighting the importance of understanding the information environment, which includes information itself, the individuals and systems processing it, and the cognitive and physical spaces involved. NATO focuses on both offensive and defensive information activities, such as disrupting an adversary’s command and control infrastructure or protecting friendly communication systems. Furthermore, the doctrine stresses the essential role of strategic communications (StratCom) and the emerging significance of cognitive warfare, which targets the perception and decision-making processes of individuals and groups. This comprehensive approach aims to achieve information superiority and cognitive resilience by ensuring coherent, focused, and politically sensitive operations that safeguard NATO’s decision-making and maintain initiative in complex multi-domain environments (NATO, 2023; NATO Standard AJP-3.10, 2024).

Major global powers exhibit distinct approaches to information warfare and influence operations (IWIO). The United States has traditionally viewed IWIO in military terms, largely within the cyberspace domain, and has tended to separate peacetime from wartime activities, limiting offensive capabilities when not in conflict (Hunter et al., 2024). US joint doctrine defines information operations (IO) as the integrated employment of information-related capabilities (IRCs) during military operations to influence, disrupt, corrupt, or usurp adversary decision-making while protecting friendly capabilities (U.S. Joint Chiefs of Staff, 2012). IRCs are the tools and techniques, including military information support operations, military deception, electronic warfare, and cyberspace operations, used within the information environment (U.S. Joint Chiefs of Staff, 2012). The US Army’s more recent concept of “Information Advantage and Decision Dominance” seeks to achieve information advantage over relevant actors using all military capabilities (Hunter et al., 2024). However, the US faces challenges, including a lack of centralized data collection and poor inter-agency communication for IWIO analysis (Hunter et al., 2024). Other Western democracies fare no better in this respect.

In stark contrast, Russia views information as paramount and engages in offensive IWIO activities constantly, regardless of the channel, treating them as part of a perpetual conflict with adversaries such as the United States in the international arena (Hunter et al., 2024; Swedish Defence Research Agency (FOI), 2015). Russian information warfare is a strategic, highly politicized matter that requires coordination across many government agencies and is conducted continuously in peacetime and wartime (Swedish Defence Research Agency (FOI), 2015). Its IW instruments are not limited to cyber technology but include various means and methods, ranging from system attacks to undermining society, broad psychological operations, and targeting decision-makers (Swedish Defence Research Agency (FOI), 2015). Russian strategic information warfare aims to disrupt leadership, mislead enemies, shape public opinion and perceptions, organize anti-government activities, and weaken the enemy’s will, often treating influence and technical aspects as a unified whole (Swedish Defence Research Agency (FOI), 2015).

China’s approach is influenced by Sun Tzu and Mao Zedong, centering on psychology and its “three warfares” (legal, psychological, and media operations) to manipulate legal regimes, affect public opinion, and undercut morale (Hunter et al., 2024). China employs IW operations preemptively, often combining tactics like electronic warfare and cyber-warfare, aiming for “informatization warfare” – applying information technology to all military operations (Hunter et al., 2024).

This article examines how these concepts manifest in practice, drawing on a fictional example to illustrate tactics and discussing the vulnerabilities and defensive requirements for democratic states in this dynamic and complex threat landscape, amplified by the increasing role of artificial intelligence.

The Escalating Role of Artificial Intelligence

Artificial intelligence (AI) technologies are profoundly transforming the international security and defense domain, particularly in cyberspace (Cristiano et al., 2023). AI empowers military and intelligence agencies with new solutions for predicting and countering threats, as well as conducting offensive cyber operations (Cristiano et al., 2023). As data and information have become more important, AI development has gained momentum (Cristiano et al., 2023).

AI enables significant efficiency and effectiveness in the execution of cybersecurity operations, saving time and resources. It can automate tasks such as generating knowledge about cyber threats and automating decision-making (Cristiano et al., 2023). Additionally, AI promises to help identify vulnerabilities through timely data interpretation (Cristiano et al., 2023).

AI technologies, including machine learning, neural networks, and deep learning, can significantly enhance capabilities for automating IWIO, especially in reaching mass audiences and influencing public perceptions (Hunter et al., 2024). AI increases the speed of IWIO operations and can have a wide range of applications, with its influence on IW tactics and techniques expected to grow (Hunter et al., 2024).

AI techniques can be utilized on material with identifiable patterns, such as text, audio, and video, to identify, learn from, and reproduce correlations (Moy & Gradon, 2023). AI-assisted automation is anticipated to be a major feature of IW in the next decade, applicable to both automatically releasing and automatically generating disinformation (Moy & Gradon, 2023). While automated release is simpler, AI can add realism to create the illusion of human behavior (Moy & Gradon, 2023). Automated generation requires data and the capability to produce content following a specific strategy (Moy & Gradon, 2023). AI can also identify and display human emotion (Moy & Gradon, 2023).

The weaponization of AI for IW operations finds a natural home in cyberspace (Moy & Gradon, 2023). These technologies are already advanced and known to be weaponized by malicious actors (Moy & Gradon, 2023). AI-supported disinformation campaigns pose a significant threat to democracy and society by targeting perceptions, specifically belief and trust (Moy & Gradon, 2023).

Offensively, AI is integrated into IWIO strategies by major powers like China and Russia (Hunter et al., 2024). It is used to increase social and political tensions and divisions, especially within the US, via social media (Hunter et al., 2024). China has used AI to manipulate public sentiment in Taiwan and international opinion on Hong Kong and the Uyghurs, employing tactics such as eliminating negative press, generating propaganda videos, circumventing spam detection, and flooding social media with misinformation (Hunter et al., 2024). China also plans to use AI to monitor its own citizens’ information space (Hunter et al., 2024).

AI is used to identify and target “cognitive gaps” between social groups, exploiting contradictions and creating division, often by targeting human emotions (Hunter et al., 2024). Russia employs AI in its IWIO tactics to increase domestic tensions and undermine antagonistic agencies in Western states (Hunter et al., 2024). AI is a powerful tool for generating and amplifying disinformation, evident in the Russia-Ukraine conflict (Hunter et al., 2024).

The United States, by contrast, focuses primarily on defensive applications of AI within its IWIO strategy (Hunter et al., 2024). Through collaborations, the US uses AI to identify, categorize, and counter potential international IWIO threats, including sifting vast amounts of data to identify misinformation, propaganda, and divisive content on social media (Hunter et al., 2024). Emphasis is placed on using AI to counter AI-driven deepfake technology that adversaries could use (Hunter et al., 2024). For example, the US Department of State employs AI for Disinformation Topic Modelling, Image Clustering, and Deepfake Detection to recognize and counter disinformation attempts more quickly (Hunter et al., 2024). AI is also used to protect critical infrastructure from cyber-attacks by applying machine learning to attack indicators and generating AI defenses (Hunter et al., 2024).
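To make the idea of sifting social media content for coordinated messaging more concrete, the following minimal sketch flags near-duplicate posts by comparing overlapping word sequences. It does not represent the tooling of any agency cited above; the function names, the similarity threshold, and the sample posts are all assumptions introduced for illustration, and real systems rely on far richer signals (accounts, timing, images, language models).

```python
from itertools import combinations


def shingles(text: str, k: int = 3) -> set:
    """Return the set of k-word shingles (word sequences) of a lowercased post."""
    words = text.lower().split()
    if len(words) < k:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a: set, b: set) -> float:
    """Jaccard similarity |a & b| / |a | b|, defined as 0.0 for two empty sets."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def near_duplicate_pairs(posts: dict, threshold: float = 0.5) -> list:
    """Return (id_a, id_b, similarity) for every pair at or above the threshold."""
    sets = {post_id: shingles(text) for post_id, text in posts.items()}
    flagged = []
    for a, b in combinations(sorted(sets), 2):
        similarity = jaccard(sets[a], sets[b])
        if similarity >= threshold:
            flagged.append((a, b, similarity))
    return flagged


# Hypothetical sample posts, invented for illustration only.
SAMPLE_POSTS = {
    "p1": "the mayor ignored every warning about the subway system",
    "p2": "the mayor ignored every warning about the subway system again",
    "p3": "great weather for the city marathon this weekend",
}

FLAGGED = near_duplicate_pairs(SAMPLE_POSTS)
for post_a, post_b, score in FLAGGED:
    print(f"{post_a} ~ {post_b}: similarity {score:.3f}")
```

A high similarity between posts from unrelated accounts is only a hint of coordination, not proof; the pairwise comparison is also quadratic in the number of posts, so a production system would need an approximate method instead.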

NATO’s strategy emphasizes the responsible and ethical integration of artificial intelligence technologies to enhance the alliance’s resilience and response capabilities against AI-enabled disinformation and hybrid threats. NATO focuses on developing AI governance standards that ensure trustworthy, transparent, and inclusive AI systems to protect democratic institutions. The alliance recognizes the dual-use nature of AI and promotes a multi-pillar approach combining technological innovation, regulatory frameworks, and educational efforts to build societies resilient to manipulation and misinformation. NATO actively employs AI-powered tools for early detection and mitigation of AI-generated false content while supporting member states in fostering media literacy and critical thinking to counter cognitive vulnerabilities. These efforts align with NATO’s broader goal of safeguarding the information environment, maintaining transparency and accountability, and preserving democratic norms in an increasingly AI-driven security landscape (NATO Allied Command Transformation, 2025; European Defence Agency, 2025).

A Fictional Case Scenario

Although the events described below are fictional and do not refer to real people or events, each element mirrors the same or similar incidents that have occurred in different geographies.

A promising politician is elected mayor of one of the biggest cities in the country. He comes from a well-known family, and his educational background is just as distinguished. He graduated with honors from one of the world’s most prestigious universities, and with the support of his family, he opened a law office, proved himself by winning important cases, and eventually secured a significant place in politics.

He believes that a democratic social structure that prioritizes human rights will contribute positively to the solution of every problem. He argues that the healthy functioning of free market conditions is based on equality of opportunity. Therefore, he emphasizes the necessity of delivering public services to the most disadvantaged segments of society. Thanks to these views, he wins votes from across society, from the owners of capital to its poorer segments.

The politician, who quickly established himself as mayor, rapidly gains prominence and becomes one of the most popular candidates for the presidency of the country. As he increasingly shifts from local to national politics, his fundamental positions on international relations become a matter of curiosity. Believing that his country’s security depends on global stability, he draws attention with his peaceful solutions to regional crises. As a result, his popularity increases, but he also becomes the target of various factions and begins to encounter a series of seemingly unrelated problems. These problems not only test the politician’s credibility and ability to handle crises but also call into question the core values he advocates. Some of the issues he faces two years before the presidential election are listed below:

A car attack at a local social event kills twenty people, including children, and injures many others. The perpetrator is a migrant citizen who, despite an educated and professional background, has psychological problems. Initially, the attack is thought to be a hate crime because of his different religious background, but it is later revealed that he has a psychological disorder. Meanwhile, videos circulating on social media spread the belief that adequate precautions were not taken and that the city administration is weak. Photographs of the mayor with the suspect at different events also circulate.

The investigation reveals that the videos circulating on social media were entirely fake, that all but one of the photos were fabricated, and that the suspect had no more contact with the mayor than any ordinary citizen would. Nevertheless, the politician’s image as the “perfect mayor” is called into question, as are public attitudes toward immigration and tolerance of different religions and cultures.

The subway system, the city’s most important means of transportation, begins to experience frequent breakdowns. Growing dissatisfaction in society peaks when an accident occurs during a subway ride. In the accident, several wagons derail due to a signaling malfunction in the automation system, and many people are injured, although no fatalities occur. It is later determined that a much worse accident was narrowly avoided because the conductor noticed something was wrong and slowed the train in time; he was rewarded for his vigilance. However, the mishaps and the accident lead to accusations that the city has not invested enough in its infrastructure, that the mayor prioritizes national politics over his local responsibilities, and that his successes in local politics so far have been due to investments made by previous politicians.

The investigation reveals that the accident and disruptions occurred after the account of a staff member responsible for automation was compromised due to a phishing attack. The fact that these disruptions were spread over time, in a manner that reinforced negative public opinion, and that the accident happened immediately after reports that the mayor would seek the presidential nomination, strengthened the theory that the attackers were a hostile state-sponsored team. However, this theory could not be proven.

Following the announcement that he would participate in his party’s presidential primary, the mayor’s family life, professional background, and even his student years come under scrutiny, fueled by allegations circulated on social media. He finds it increasingly difficult to respond to the attacks from various propaganda sources and begins to see his popularity wane in public opinion polls. Although most of the allegations are eventually proven to be unfounded, the professional quality of the propaganda materials indicates that he is being targeted by a team with significant resources. It becomes evident that the attacks are tailored to society’s sensitivities and rely on AI-generated content that is circulated strategically.

Case Analysis

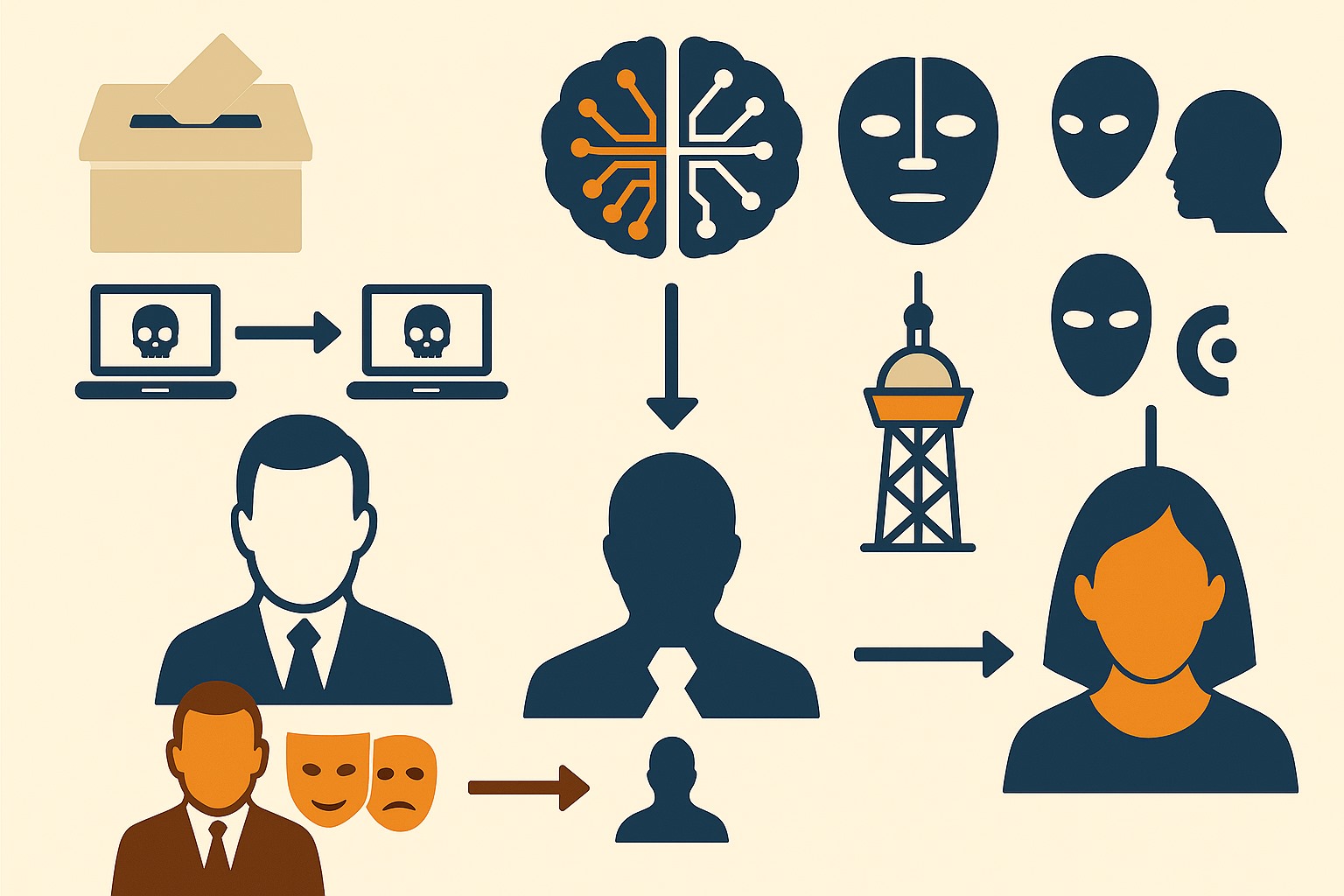

This fictional case scenario reflects real-world examples of IW tactics employed across various geographies. It shows that contemporary IW can have both local and global effects, operating through AI-supported or classical cyberattacks, disinformation, psychological operations, and potentially the sabotage of systems. Such operations are often carried out by advanced persistent threats (APTs) or state-based actors with significant budgets and a strategic outlook, which makes IW an integral part of today’s hybrid warfare. The campaign unfolds in three phases of attack:

- Cognitive Destabilization: Fabricated videos and photographs circulated after a deadly attack by a migrant suspect fuel viral misinformation, weakening public confidence in leadership.

- Infrastructure Sabotage: Manipulation of subway automation systems through a phished staff account induces service failures timed to coincide with election milestones.

- Character Erosion: Deepfake videos and falsified personal narratives fuel reputational decline, heavily amplified by coordinated bot networks.

Together, these operations reveal the holistic nature of modern IW, in which hybrid cyber, informational, and psychological elements converge to achieve strategic disruption.

Fig.2. AI-generated image

Vulnerabilities and Defensive Strategies for Democracies

The evolving security landscape presents complex challenges for Western democracies, often leaving them on the defensive against states that are adept at information control and interference (Defending Democracy from Information Warfare, n.d.). A key vulnerability for democracies is their inherent openness, which, while a source of attraction and persuasion, is also exploited and manipulated by authoritarian states (Nye, 2019). Moreover, democracies’ reliance on the rule of law, public trust, and commitment to truth further exacerbates this asymmetry (Defending Democracy from Information Warfare, n.d.).

Additional critical vulnerabilities include dependence on computer systems and interconnected digital infrastructure, which adversaries exploit using IRCs (U.S. Joint Chiefs of Staff, 2012). The growth of ICT-enabled and AI-enhanced systems increases military efficiency and effectiveness but also expands the attack surface (U.S. Department of the Army, 2018). The human element is a significant vulnerability and is often the weakest link in system security (U.S. Department of the Army, 2018). Citizens face risks from easily available, manipulated content and a lack of competence in evaluating it (Monsees, 2021). Social engineering tactics continue to exploit human psychology. Domestic factors such as democratic backsliding, societal polarization, and rising populism further challenge necessary cooperation among democracies (Munich Security Conference, 2024).

To counter these threats, a comprehensive defensive strategy is required, one that involves the coordination of multiple government departments and close collaboration with NGOs and the private sector (Nye, 2019). Such a strategy must include domestic resilience, deterrence, and diplomacy (Nye, 2019).

Key aspects of domestic resilience include:

- Strengthening the security of electoral infrastructure, hardening voter databases, and supporting local election officials with training and threat information (Nye, 2019).

- Raising the general level of cyber hygiene, including encouraging encryption and multi-factor authentication (MFA), particularly phishing-resistant MFA for all users, and improving government cybersecurity standards (Nye, 2019).

- Increasing public awareness of IW techniques and indicators of malign influence campaigns (“inoculating the public by exposure”) (Nye, 2019).

- Supporting investigative journalism and fact-checking (Nye, 2019).

- Securing critical infrastructure, including IT, IoT, and SCADA systems through threat intelligence, monitoring, contingency planning, and multi-layered security.
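The emphasis on phishing-resistant MFA in the list above can be illustrated with a toy challenge-response exchange in which the authenticator's answer is bound to the site origin. This is a simplified sketch, not an implementation of any standard: real phishing-resistant schemes such as FIDO2/WebAuthn use public-key signatures, whereas the example below substitutes a shared-secret HMAC so that it runs with the Python standard library alone. The class, function names, and origins are hypothetical.

```python
import hashlib
import hmac
import secrets
from typing import Dict, Optional

# Hypothetical origins, invented for illustration.
REAL_ORIGIN = "https://metro-ops.example"
PHISHING_ORIGIN = "https://metro-0ps.example"  # look-alike domain


class Authenticator:
    """Toy authenticator that keeps one secret per origin it was enrolled with."""

    def __init__(self) -> None:
        self._keys: Dict[str, bytes] = {}

    def register(self, origin: str) -> bytes:
        key = secrets.token_bytes(32)
        self._keys[origin] = key
        # Assumption: the key is shared with the server at enrolment.
        # This HMAC stand-in replaces the public-key pair used in practice.
        return key

    def respond(self, origin: str, challenge: bytes) -> Optional[bytes]:
        # The origin comes from the browser, not from the user, so a
        # look-alike site has no enrolled key and receives no response.
        key = self._keys.get(origin)
        if key is None:
            return None
        return hmac.new(key, origin.encode() + challenge, hashlib.sha256).digest()


def server_verify(key: bytes, origin: str, challenge: bytes,
                  response: Optional[bytes]) -> bool:
    """Check that the response covers this server's origin and challenge."""
    if response is None:
        return False
    expected = hmac.new(key, origin.encode() + challenge, hashlib.sha256).digest()
    return hmac.compare_digest(expected, response)


device = Authenticator()
server_key = device.register(REAL_ORIGIN)
challenge = secrets.token_bytes(16)

# Legitimate login: the user is on the real site.
legit_ok = server_verify(server_key, REAL_ORIGIN, challenge,
                         device.respond(REAL_ORIGIN, challenge))

# Phishing attempt: the attacker relays the real challenge via a fake site.
phish_response = device.respond(PHISHING_ORIGIN, challenge)
phish_ok = server_verify(server_key, REAL_ORIGIN, challenge, phish_response)

print("legitimate login accepted:", legit_ok)
print("phished login accepted:", phish_ok)
```

The design point is that nothing the user can be tricked into typing, such as a password or a one-time code, is sufficient to log in: the response depends on an origin that the look-alike site cannot claim.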

Deterrence measures involve making targets harder to attack and thereby raising the costs for adversaries (deterrence by defence) (Nye, 2019). Beyond securing sensitive areas such as electoral processes and critical infrastructure, national and international defence organisations can also employ sovereign cyber effects to punish attributed attacks and deter future ones.

Diplomacy is crucial for developing international norms, standards, and collective arrangements to protect critical infrastructure and services (Nye, 2019). International cooperation among like-minded countries is essential (Munich Security Conference, 2024), as is collaboration with the private sector and civil society (Nye, 2019). Bilateral agreements can help set expectations and curb dangerous operations (Nye, 2019). Collaboration on AI security policy and on international standards for AI security is also important. Cooperating with all stakeholders in the IT sector to raise security standards and strengthening AI-supported open-source threat intelligence are both crucial for a comprehensive defense strategy.

Significantly, while governments must counter aggressive techniques, openness remains the ultimate defense (Nye, 2019). Measures that curtail openness and trust would be self-inflicted wounds (Nye, 2019). Imitating authoritarian practices like large-scale covert information warfare would be a mistake and ultimately a defeat, potentially undermining democratic soft power (Nye, 2019). Lowering democratic standards to match adversaries would squander a key advantage (Nye, 2019).

Conclusion

Contemporary IW is a persistent, strategic, and technologically advanced form of conflict, deeply integrated into statecraft. Major powers like the US, Russia, and China employ distinct strategies, with Russia and China demonstrating a continuous offensive posture across all domains. Artificial intelligence is rapidly transforming this landscape, enhancing the speed, automation, and scale of both offensive operations (disinformation generation, manipulation, targeting divisions) and defensive efforts (threat identification, countering deepfakes and cyber-attacks).

The fictional examples illustrate how psychological operations using disinformation and synthetic media, and technical disruptions via cyber-attacks, can be combined and strategically timed to achieve political objectives, often executed by sophisticated state-sponsored actors. These tactics exploit vulnerabilities in democratic societies, particularly their fundamental openness, dependence on digital infrastructure, and human susceptibility to manipulation.

Defending democracy in the cyber age is an ongoing and challenging process that requires a comprehensive strategy (Nye, 2019). This strategy must balance robust technical and operational defenses, deterrence, and international cooperation with the core democratic values of openness and trust (Nye, 2019). Openness is paradoxically both a vulnerability and the ultimate defense (Nye, 2019). The threat landscape is dynamic and amplified by technology, necessitating continuous adaptation and collective action to build resilience. As a major alliance, NATO should play a crucial role in this threat landscape. As AI continues to evolve, both individual states and NATO must make crucial decisions about aligning their IWIO strategies with their values and security needs (Hunter et al., 2024). This requires reciprocal trust among allied members and speed in decision-making processes.

References

Bryczek-Wróbel, P., & Moszczyński, M. (2022). The evolution of the concept of information warfare in the modern information society of the post-truth era. Defence Science Review, (13). https://doi.org/10.37055/pno/152385

Cristiano, F., Broeders, D., Delerue, F., Douzet, F., & Géry, A. (Eds.). (2023). Artificial intelligence and international conflict in cyberspace. Routledge.

European Defence Agency. (2025). Trustworthiness for AI in Defence (White Paper). https://eda.europa.eu/docs/default-source/brochures/taid-white-paper-final-09052025.pdf

Hartmann, K., & Giles, K. (2020). The next generation of cyber-enabled information warfare. In T. Jančárková, L. Lindström, M. Signoretti, I. Tolga, & G. Visky (Eds.), 20/20 Vision: The Next Decade (pp. 95–103). NATO CCDCOE Publications.

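Hunter, L. Y., Albert, C. D., Rutland, J., Topping, K., & Hennigan, C. (2024). Artificial intelligence and information warfare in major power states: How the US, China, and Russia are using artificial intelligence in their information warfare and influence operations. Defense & Security Analysis. Advance online publication. https://doi.org/10.1080/14751798.2024.2321736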

Monsees, L. (2021). Information disorder, fake news, and the future of democracy. Globalizations, 20(1), 153–168. https://doi.org/10.1080/14747731.2021.1927470

Moy, W. R., & Gradon, K. T. (2023). Artificial intelligence in hybrid and information warfare: A double-edged sword. In F. Cristiano, D. Broeders, F. Delerue, F. Douzet, & A. Géry (Eds.), Artificial intelligence and international conflict in cyberspace (pp. 47–74). Routledge.

Munich Security Conference. (2024). Munich security report 2024. Munich Security Conference. https://securityconference.org/en/publications/munich-security-report-2024/

NATO. (2023). Allied Joint Doctrine for Information Operations (AJP-3.10). https://info.publicintelligence.net/NATO-IO.pdf

NATO Standard AJP-3.10. (2024). Allied Joint Doctrine for Information Operations. NATO Standardization Office. https://mpsotc.army.gr/wp-content/uploads/2024/03/2.-AJP-3.10-EDA-V1-E.pdf

NATO Allied Command Transformation. (2025). Harnessing Artificial Intelligence in Defence (Brochure). NATO ACT. https://www.act.nato.int/article/harnessing-artificial-intelligence/

Nye, J. S. (2019). Protecting democracy in an era of cyber information war. Belfer Center for Science and International Affairs, Harvard Kennedy School.

Swedish Defence Research Agency (FOI). (2015). War by non-military means: Understanding Russian information warfare (FOI-R--4065--SE).

U.S. Department of the Army. (2018). The conduct of information operations (ATP 3-13.1).

U.S. Joint Chiefs of Staff. (2012). Information operations (Joint Publication 3-13).

Mehmet Calkayis is an IT Security professional specializing in cloud security. He served in the Turkish Army for 24 years, assuming technical and managerial roles at national and international levels. In addition to his voluntary administrative duties at the NAVI Research Institute, he contributes to academic research.